Deploy a K8s Cluster from Scratch and Install DCE 5.0 Community

This article walks through installing DCE 5.0 Community from scratch on a 3-node cluster, covering the Kubernetes cluster itself, dependencies, networking, storage, and other considerations.

Note

The installation methods described in this article may differ from the latest version due to rapid version iterations. Please refer to the installation instructions in the product documentation for the most up-to-date information.

Cluster Planning

The example uses 3 UCloud VM instances, each with 8 cores and 16 GB RAM.

| Role | Hostname | Operating System | IP Address | Configuration |
|---|---|---|---|---|
| control-plane | k8s-master01 | CentOS 8.3 | 10.23.* | 8 cores, 16GB RAM, 200GB system disk |
| worker-node | k8s-work01 | CentOS 8.3 | 10.23.* | 8 cores, 16GB RAM, 200GB system disk |
| worker-node | k8s-work02 | CentOS 8.3 | 10.23.* | 8 cores, 16GB RAM, 200GB system disk |

The components used in this example are:

- Kubernetes: 1.25.8

- CRI: containerd (Kubernetes removed dockershim in v1.24, so Docker Engine is no longer supported directly)

- CNI: Calico

- StorageClass: local-path

- DCE 5.0 Community: v0.18.1

Prepare Nodes

The following operations are necessary before installation.

Node Configuration

Perform the following steps on each of the 3 nodes.

- Configure the hostname. Give each node a unique hostname to avoid conflicts; run the command that matches the node:

hostnamectl set-hostname k8s-master01 # on the control-plane node
hostnamectl set-hostname k8s-work01 # on the first worker node
hostnamectl set-hostname k8s-work02 # on the second worker node

After changing the hostname, log out of the SSH session and log in again so that the new hostname is displayed.

- Disable swap.

- Disable the firewall (optional).

- Set kernel parameters and enable iptables to handle bridged traffic.

Load the br_netfilter module:

cat <<EOF | tee /etc/modules-load.d/kubernetes.conf
br_netfilter
EOF
# Load the modules
sudo modprobe overlay
sudo modprobe br_netfilter

Modify kernel parameters such as ip_forward and bridge-nf-call-iptables:
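The swap, firewall, and kernel-parameter steps above can be sketched as follows. This is a minimal sketch under stated assumptions, not the article's original commands: the helper names `comment_out_swap` and `write_k8s_sysctl` are hypothetical, `/etc/sysctl.d/k8s.conf` is an assumed file name, and the privileged commands appear only as comments so that the helpers can be tried on scratch files first.

```shell
# Hypothetical helper: comment out every active swap entry in an fstab-style
# file (default: /etc/fstab) so swap stays off after a reboot.
# A .bak copy of the original file is kept next to it.
comment_out_swap() {
  local fstab="${1:-/etc/fstab}"
  sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# Hypothetical helper: write the kernel parameters that let iptables see
# bridged traffic and enable IPv4 forwarding. The default path is an assumption.
write_k8s_sysctl() {
  local conf="${1:-/etc/sysctl.d/k8s.conf}"
  cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-iptables  = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward                 = 1
EOF
}

# Typical use on each node (as root):
#   swapoff -a && comment_out_swap         # disable swap now and at boot
#   systemctl disable --now firewalld      # optional: stop the firewall
#   write_k8s_sysctl && sysctl --system    # apply the kernel parameters
```

Keeping the file paths as parameters makes it possible to review the resulting files before touching `/etc`.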

Install Container Runtime (containerd)

- If using CentOS 8.x, uninstall the pre-installed Podman to avoid version conflicts.

- Install dependencies.

- Install containerd. You can use either the binary or the yum package. The yum package is maintained by the Docker community, and this example uses it.

sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
sudo yum makecache
yum install containerd.io -y
ctr -v # Verify the installed version, e.g., ctr containerd.io 1.6.20

- Modify the containerd configuration file.

# Delete the default config.toml to avoid errors with kubeadm later on: CRI v1 runtime API is not implemented for endpoint
mv /etc/containerd/config.toml /etc/containerd/config.toml.old
# Reinitialize the configuration
sudo containerd config default | sudo tee /etc/containerd/config.toml
# Update the configuration: use systemd as the cgroup driver and replace the pause image address
sed -i 's/SystemdCgroup\ =\ false/SystemdCgroup\ =\ true/' /etc/containerd/config.toml
sed -i 's/k8s.gcr.io\/pause/k8s-gcr.m.daocloud.io\/pause/g' /etc/containerd/config.toml # Old pause address
sed -i 's/registry.k8s.io\/pause/k8s-gcr.m.daocloud.io\/pause/g' /etc/containerd/config.toml
sudo systemctl daemon-reload
sudo systemctl restart containerd
sudo systemctl enable containerd

- Install CNI (optional).

- Install nerdctl (optional).

curl -LO https://github.com/containerd/nerdctl/releases/download/v1.2.1/nerdctl-1.2.1-linux-amd64.tar.gz
tar xzvf nerdctl-1.2.1-linux-amd64.tar.gz
mv nerdctl /usr/local/bin
nerdctl -n k8s.io ps # View containers
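Before restarting containerd, it may be worth confirming that the `sed` edits to the configuration file took effect. The following is a minimal sketch, assuming the default config path; `check_containerd_config` is a hypothetical helper name, not part of containerd.

```shell
# Hypothetical helper: succeed only if the containerd config enables the
# systemd cgroup driver and no longer references the upstream pause image
# registries (k8s.gcr.io or registry.k8s.io).
check_containerd_config() {
  local conf="${1:-/etc/containerd/config.toml}"
  # The cgroup driver line must be set to true.
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$conf" || return 1
  # No pause image reference may still point at an upstream registry.
  if grep -Eq '(k8s\.gcr\.io|registry\.k8s\.io)/pause' "$conf"; then
    return 1
  fi
  return 0
}

# Typical use on a node:
#   check_containerd_config && echo "containerd config looks OK"
```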

Install Kubernetes Cluster

Install Kubernetes Binary Components

Perform the following steps on all three nodes:

- Add the Kubernetes software repository (using Aliyun's mirror for acceleration).

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

- Set SELinux to permissive mode (equivalent to disabling it).

- Install Kubernetes components (use version 1.25.8 as an example).
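The SELinux and package-installation steps above can be sketched as follows. This is a minimal sketch, assuming the stock `/etc/selinux/config` layout and that the repository names its packages `kubelet`, `kubeadm`, and `kubectl`; the helper names `set_selinux_permissive` and `k8s_packages` are hypothetical.

```shell
# Hypothetical helper: switch SELinux to permissive in its config file so the
# setting survives a reboot (default: /etc/selinux/config). Keeps a .bak copy.
set_selinux_permissive() {
  local conf="${1:-/etc/selinux/config}"
  sed -i.bak 's/^SELINUX=enforcing$/SELINUX=permissive/' "$conf"
}

# Hypothetical helper: print version-pinned package names for one release,
# so that all three components are installed at the same version.
k8s_packages() {
  local version="$1"
  echo "kubelet-${version} kubeadm-${version} kubectl-${version}"
}

# Typical use on each node (as root):
#   setenforce 0 && set_selinux_permissive
#   yum install -y $(k8s_packages 1.25.8)
#   systemctl enable --now kubelet
```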

Install the First Master Node using kubeadm

- Pre-download the images from DaoCloud's image repository to speed up installation.

- Initialize the first node using kubeadm (using DaoCloud's image repository).

Note

The Pod CIDR must not overlap with the IP address range of the host machine's physical network. The same CIDR must also be used in the Calico configuration later.

sudo kubeadm init --kubernetes-version=v1.25.8 --image-repository=k8s-gcr.m.daocloud.io --pod-network-cidr=192.168.0.0/16

After about ten minutes, you will see output like the following. Note down the kubeadm join command and its token, as they are used later. 🔥

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.23.207.16:6443 --token p4vw62.shjjzm1ce3fza6q7 \
        --discovery-token-ca-cert-hash sha256:cb1946b96502cbd2826c52959d0400b6e214e06cc8462cdd13c1cb1dc6aa8155

- Configure the kubeconfig file so that kubectl can manage the cluster.

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
kubectl get no # You will see the first node, but it will still be NotReady

- Install CNI, using Calico as an example.

Refer to the official Calico installation documentation for the correct installation method.

    - Download the Calico deployment manifest:

    - Use the following command to accelerate image pulling:

    - Install Calico using the following command:

    - Wait for the deployment to succeed: all Pods should be Running, and the first node should become Ready.

Note

Before you install Calico, create a calico-system namespace in advance. Please refer to Create Namespace.
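The Calico steps above can be sketched as follows. This is a minimal sketch under stated assumptions: `use_daocloud_mirror` is a hypothetical helper name, `CALICO_MANIFEST_URL` is a placeholder for the manifest URL given in the official Calico installation guide, and the manifest is assumed to reference its images as `image: docker.io/...`. The mirror host `docker.m.daocloud.io` is the one this article uses for the local-path images.

```shell
# Hypothetical helper: rewrite docker.io image references in a manifest so the
# images are pulled through the docker.m.daocloud.io mirror.
# Edits the file in place and keeps a .bak copy of the original.
use_daocloud_mirror() {
  local manifest="$1"
  sed -i.bak -E 's#(image:[[:space:]]*)docker\.io/#\1docker.m.daocloud.io/#' "$manifest"
}

# Typical use on the control-plane node:
#   curl -Lo calico.yaml "$CALICO_MANIFEST_URL"   # placeholder URL, see lead-in
#   use_daocloud_mirror calico.yaml
#   kubectl apply -f calico.yaml
#   kubectl get pods -A -w     # wait for all Pods to be Running
#   kubectl get nodes          # the first node should become Ready
```

If the Pod CIDR passed to kubeadm init differs from the default in the manifest, the manifest needs the same CIDR before it is applied.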

Add Additional Worker Nodes

Finally, run the join command on each of the other worker nodes. kubeadm init printed this command on the master node. The endpoint, token, and CA certificate hash are all environment-specific, so do not copy the values below directly:

kubeadm join 10.23.207.16:6443 --token p4vw62.shjjzm1ce3fza6q7 \
        --discovery-token-ca-cert-hash sha256:cb1946b96502cbd2826c52959d0400b6e214e06cc8462cdd13c1cb1dc6aa8155

After a node joins successfully, the output will be similar to:

This node has joined the cluster:

* Certificate signing request was sent to the apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

On the master node, confirm that all nodes have been added and wait for them to become Ready.
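To confirm from the master node that every node has become Ready, the output of `kubectl get nodes` can be filtered. The following is a minimal sketch; `not_ready_nodes` is a hypothetical helper name, and it assumes the default column layout in which STATUS is the second column.

```shell
# Hypothetical helper: read `kubectl get nodes --no-headers` output on stdin
# and print the names of nodes whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Typical use on the master node:
#   kubectl get nodes --no-headers | not_ready_nodes
# An empty result means every node reports Ready.
```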

Install Default Storage CSI (Using Local Storage)

# Reference: https://github.com/rancher/local-path-provisioner
wget https://raw.githubusercontent.com/rancher/local-path-provisioner/v0.0.24/deploy/local-path-storage.yaml
sed -i "s/image: rancher/image: docker.m.daocloud.io\/rancher/g" local-path-storage.yaml # Replace docker.io with the mirror address
sed -i "s/image: busybox/image: docker.m.daocloud.io\/busybox/g" local-path-storage.yaml
kubectl apply -f local-path-storage.yaml
kubectl get po -n local-path-storage -w # Wait for all Pods to be Running
# Set local-path as the default StorageClass
kubectl patch storageclass local-path -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
kubectl get sc # You will see something like: local-path (default)

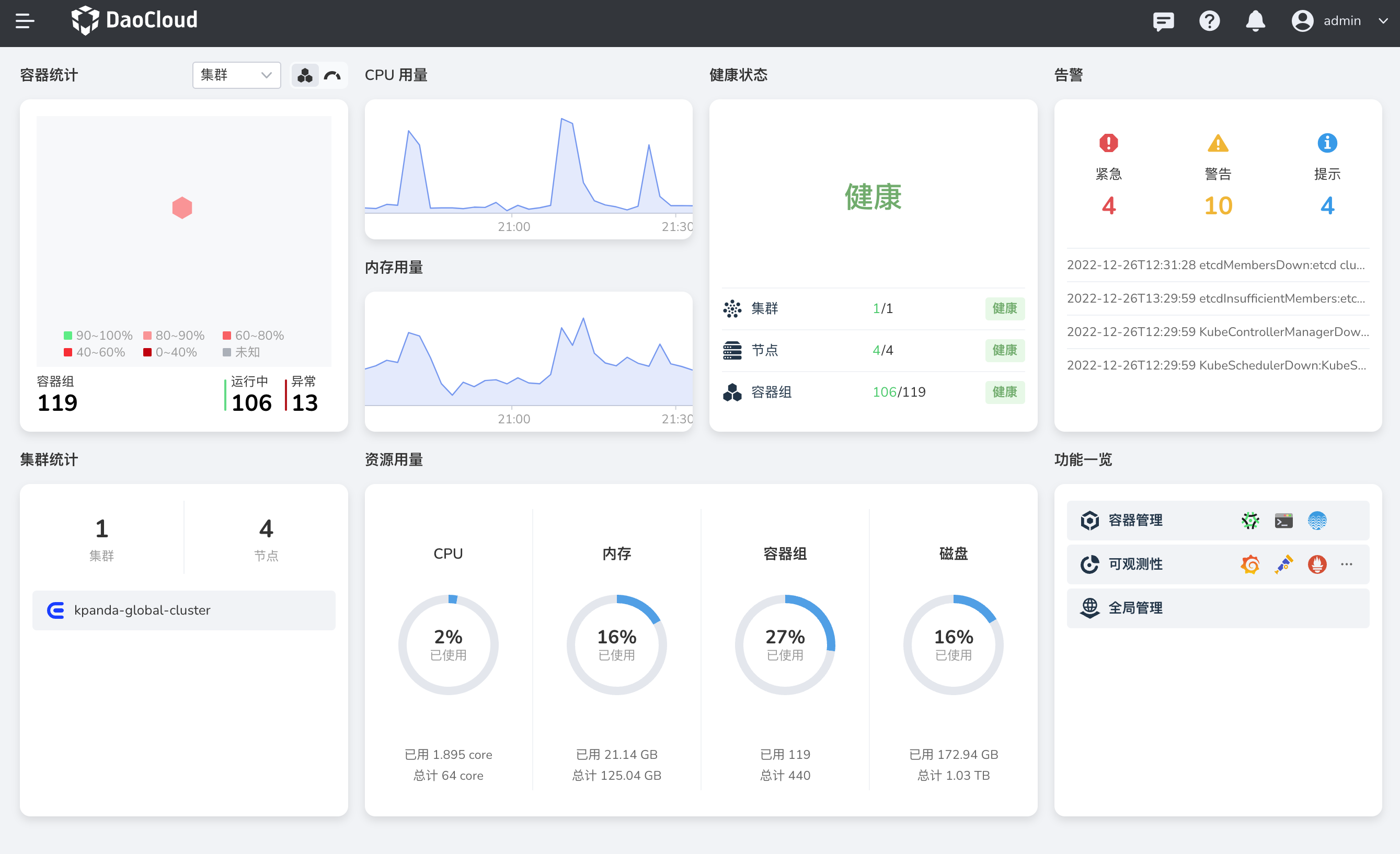

Install DCE 5.0 Community

Now that everything is ready, let's install DCE 5.0 Community.

Install Basic Dependencies

curl -LO https://proxy-qiniu-download-public.daocloud.io/DaoCloud_Enterprise/dce5/install_prerequisite.sh
bash install_prerequisite.sh online community

Download dce5-installer

export VERSION=v0.18.1
curl -Lo ./dce5-installer https://proxy-qiniu-download-public.daocloud.io/DaoCloud_Enterprise/dce5/dce5-installer-$VERSION
chmod +x ./dce5-installer

Confirm the External Reachable IP Address of the Nodes¶

-

If your browser can directly access the IP address of the master node, no additional steps are required.

-

If the IP address of the master node is internal (e.g., in the case of public cloud instances in this example):

- Create a publicly reachable IP address for it in the public cloud.

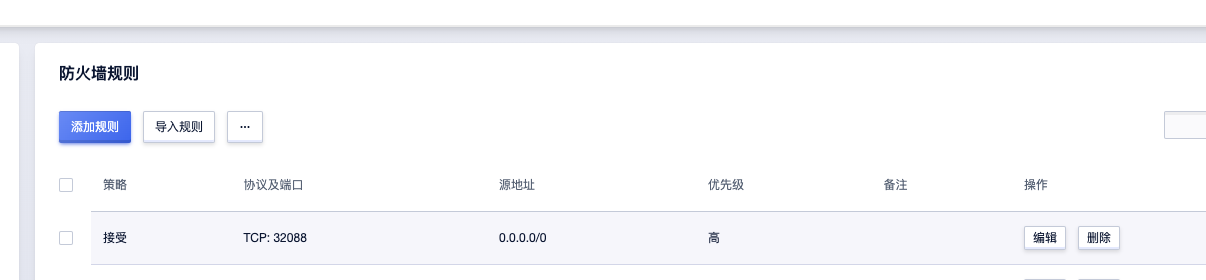

- In the public cloud configuration, allow inbound and outbound traffic on port 32088 in the firewall rules for this host.

- The above port 32088 is the NodePort port of

kubectl -n istio-system get svc istio-ingressgateway.

Run the Installation

- If your browser can directly access the IP address of the master node, run the following command:

- If the IP address of the master node is internal (e.g., in the case of public cloud instances in this example), make sure the external IP and firewall configuration described above are ready, and then run the following command:

Note: Port 32088 is the NodePort of the istio-ingressgateway Service, which you can look up with kubectl -n istio-system get svc istio-ingressgateway.

- Open the login page in your browser.

- Log in to DCE 5.0 with the username admin and password changeme.
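The two installer invocations described in the steps above can be sketched as follows. This is a sketch under loudly stated assumptions: the `install-app` sub-command and the `-z` and `-k` flags are recalled from the DCE 5.0 installer documentation and are not confirmed by this article, so check the product documentation for your installer version. The helper name `dce5_install_command` is hypothetical, and it only prints the command so that it can be reviewed before running.

```shell
# Hypothetical helper: print the installer command for this cluster.
# ASSUMPTION: `install-app -z` is the Community install invocation and
# `-k <ip>:<port>` passes the externally reachable address; verify both
# against the product documentation before use.
dce5_install_command() {
  local external_ip="${1:-}"
  local port="${2:-32088}"   # NodePort of istio-ingressgateway in this article
  if [ -n "$external_ip" ]; then
    echo "./dce5-installer install-app -z -k ${external_ip}:${port}"
  else
    echo "./dce5-installer install-app -z"
  fi
}

# Typical use on the master node:
#   dce5_install_command                 # master IP reachable from the browser
#   dce5_install_command 203.0.113.10    # example external IP (placeholder)
```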
